You have spent six months meticulously refining every trace, every via, and every decoupling capacitor. Your Schematic Capture is a work of art: a hierarchical masterpiece of engineering logic. You finally hit "export," send the files to the board house, and lean back with a sense of accomplishment. Then, the email arrives. The dread sets in before you even open it. Your lead power management IC is "Out of Stock - No EOL Date." The secondary microcontroller? 52-week lead time. Your launch schedule evaporates in an instant.

This is the silent killer of modern hardware development: supply chain volatility. It is an insidious force that turns a perfect design into a useless stack of fiberglass and copper. Having to tell a stakeholder that the prototype is delayed by a year because of a single missing $0.50 component is a catastrophic failure of the traditional design-then-procure workflow.

The board is dead on arrival. The project has stalled. The market window has closed.

The Mechanics of Data Entropy in the Supply Chain

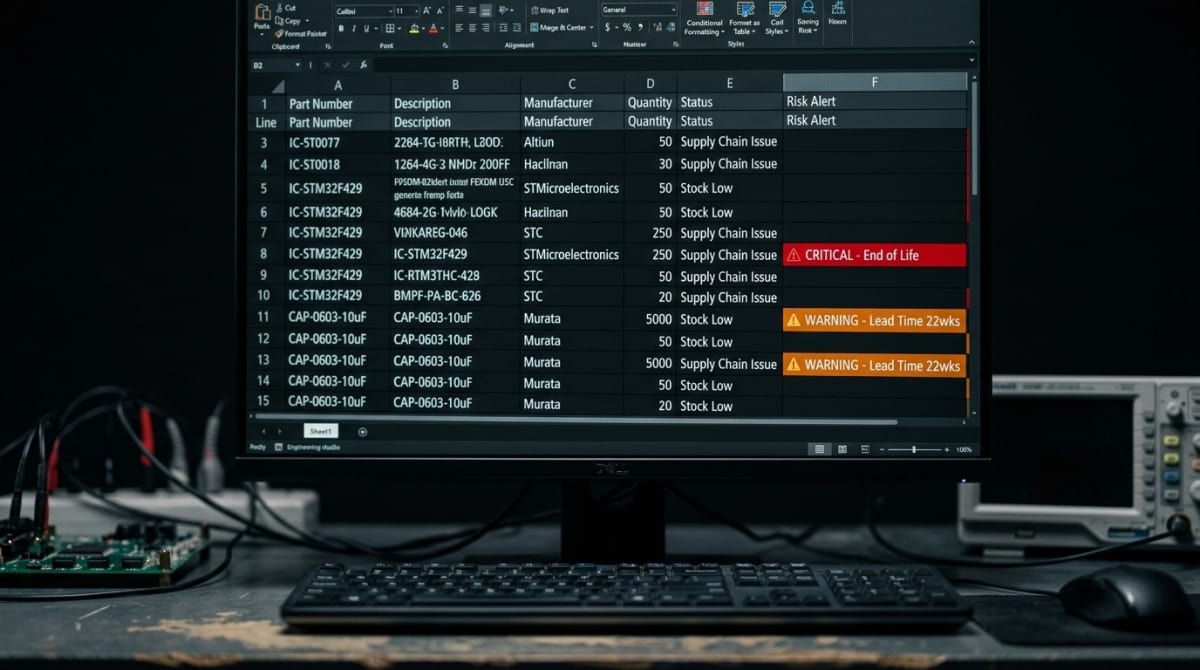

The core problem isn't just a lack of parts; it is a breakdown in the metallurgical and logistical data pipeline. A Bill of Materials (BOM) is not a static document; it is a living, breathing set of dependencies subject to the harsh laws of global entropy. Traditional BOM management relies on human engineers manually checking distributor websites or relying on outdated library data. This is the wrong way.

In the time it takes to meticulously verify a 200-line BOM, the market has already shifted. Prices fluctuate, inventory "ghosts" disappear, and technical specifications are updated. This monolithic approach to component selection is a psychological reflex born of a slower era. Today, the gap between a schematic and a production-ready file is bridged not by manual effort, but by algorithmic intelligence.

Generative AI: The New Digital Metallurgist

At Circuit Board Design, we recognize that the future of Electronics Engineering lies in Generative BOM Optimization. By leveraging Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG), we are moving away from reactive component sourcing and toward proactive, resilient selection.

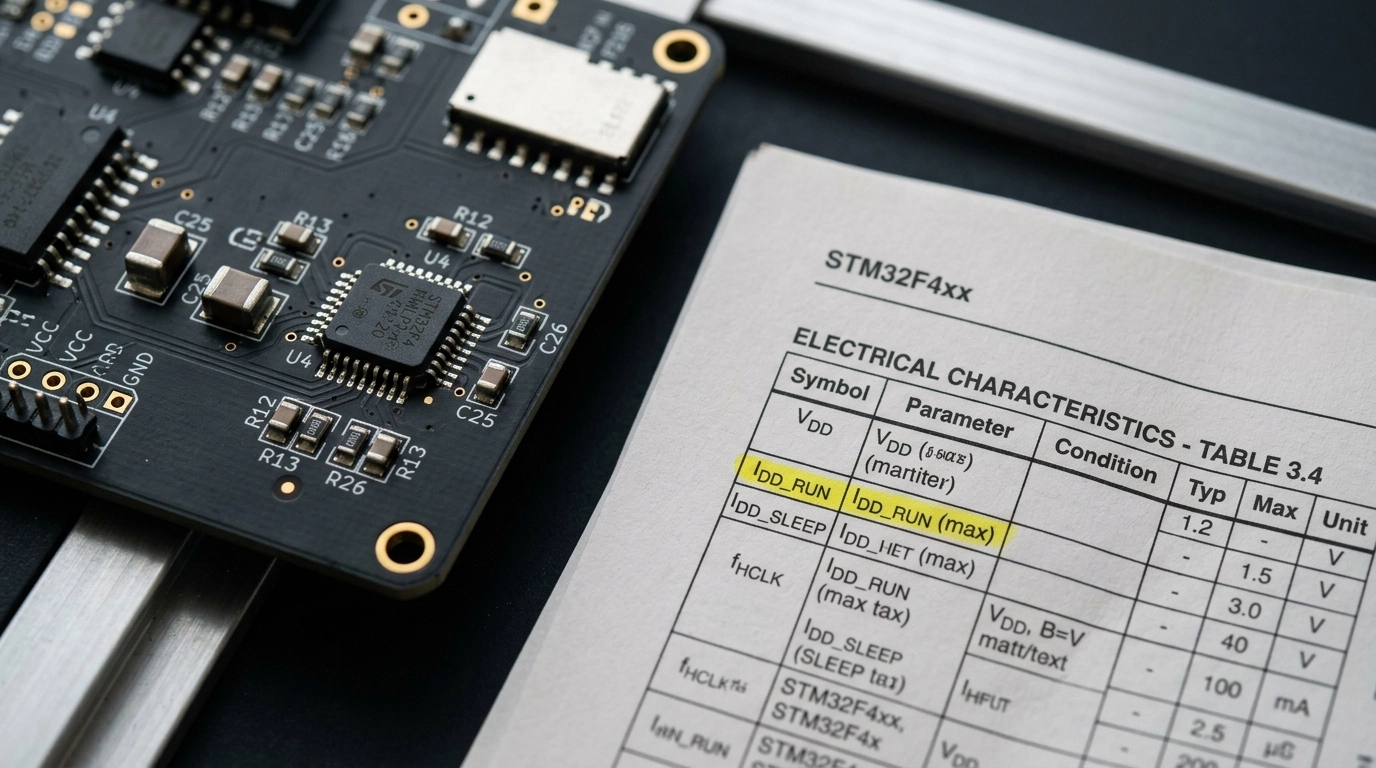

LLMs are uniquely suited to understand the nuances of component datasheets. While a traditional database search looks for exact part numbers, an LLM-driven system can understand the intent of a component. It analyzes thermal tolerances, electrical characteristics, package constraints, qualification grades, and sourcing patterns to identify drop-in replacements that a human might miss.

It’s not just about finding a part that fits the footprint; it’s about ensuring the replacement maintains the Signal Integrity, thermal behavior, derating margin, and compliance posture required for high-reliability sectors. That matters whether you are shipping an IoT node, a motor controller, or a life-adjacent medical monitoring device.

The interesting part is how a modern RAG stack does this without collapsing under the chaos of vendor PDF formatting. A datasheet corpus is not clean tabular data. It is a hostile environment of merged cells, rotated package drawings, scanned graphs, footnotes, multilingual warnings, and tiny superscripts that quietly redefine the electrical limits. That is where many AI demos fail. Spectacularly.

The practical architecture starts with ingestion. Manufacturer PDFs, PCNs, application notes, errata, qualification reports, and distributor parametric pages are pulled into a document pipeline. We then split the source into multiple retrieval layers rather than a single blob index:

Document-level storage for complete datasheets and revision history

Section-level chunks for absolute maximum ratings, recommended operating conditions, ordering information, and package data

Table-level extraction for electrical characteristics and pin definitions

Figure and note association so footnotes stay attached to the values they qualify

This is critical because engineering meaning often lives in the ugly edge cases. A regulator may appear acceptable until a footnote reveals the current rating only holds with a specific copper area and ambient condition. A microcontroller may look like a drop-in candidate until one package variant silently removes a peripheral bank. The number is there. The risk is hidden. The parser has to catch both.

A resilient RAG pipeline usually includes four stages. First, OCR and layout recovery reconstruct the physical structure of the PDF. Second, a document understanding model labels tables, headings, symbols, and notes. Third, a retrieval layer stores semantically meaningful chunks in a vector database plus a structured store for hard parameters. Fourth, the LLM reasoning layer answers engineering queries using both retrieved text and extracted fields.

In other words, the system does not ask a model to "read the whole datasheet" every time. That would be slow, expensive, and unreliable. Instead, it retrieves the exact slices of evidence needed to answer a sourcing question such as:

Is there a pin-compatible alternate with the same UVLO behavior?

Which approved op-amp alternatives maintain input offset drift across temperature?

Does the alternate package preserve assembly height and stencil assumptions?

Is the replacement actually qualified for the automotive temperature grade claimed by distribution listings?

That combination of semantic retrieval and hard parametric validation is where the metaphor of a digital metallurgist becomes useful. The model is not just searching words. It is interrogating the material reality of the part: the package mechanics, the electrical envelope, the thermal ceiling, the revision pedigree, and the sourcing trail.

At scale, the architecture also needs guardrails. We maintain source attribution at the chunk and field level, score extraction confidence, and quarantine ambiguous parses for engineer review. If a table row contains conflicting units, malformed minus signs, or image-based text with low OCR confidence, the system should not pretend certainty. It should escalate. This is how a serious PCB design consultant uses AI: not as a magic oracle, but as a force multiplier wrapped in evidence, validation, and skepticism.
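The quarantine rule described above can be sketched in a few lines of Python. This is only an illustration: the `ExtractedField` record, the `MIN_CONFIDENCE` threshold, and the `EXPECTED_UNITS` table are hypothetical names and values, not part of any specific tool.

```python
from dataclasses import dataclass

@dataclass
class ExtractedField:
    name: str              # e.g. "vin_max"
    value: float
    unit: str              # unit string as parsed from the datasheet
    source: str            # chunk-level attribution, e.g. "page 5, table 3"
    ocr_confidence: float  # 0.0 to 1.0

# Hypothetical policy values chosen for illustration only.
MIN_CONFIDENCE = 0.90
EXPECTED_UNITS = {"vin_max": {"V"}, "iout_max": {"A", "mA"}}

def triage(field: ExtractedField) -> str:
    """Return 'accept' or 'review' for a single extracted field."""
    allowed = EXPECTED_UNITS.get(field.name)
    if allowed is None or field.unit not in allowed:
        return "review"    # unknown field or conflicting unit: escalate
    if field.ocr_confidence < MIN_CONFIDENCE:
        return "review"    # low OCR confidence: do not pretend certainty
    return "accept"

clean = ExtractedField("vin_max", 36.0, "V", "page 5, table 3", 0.98)
blurry = ExtractedField("vin_max", 36.0, "V", "page 5, table 3", 0.61)
wrong_unit = ExtractedField("vin_max", 36.0, "A", "page 5, table 3", 0.99)
```

Here `triage(clean)` is accepted, while the low-confidence and wrong-unit records are routed to an engineer.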

For large libraries, hybrid retrieval works best. A semantic vector index finds conceptually similar content, while lexical retrieval catches exact strings like package codes, suffixes, or temperature grades. A graph layer can then connect manufacturer part families, approved alternates, and lifecycle notices across revisions. That means when a PMIC moves to NRND, the engine can trace sibling devices, package cousins, and previous substitutions used successfully in past projects. Fast. Explainably. With citations.
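One common way to merge a semantic ranking with a lexical ranking is reciprocal rank fusion. The article does not say which fusion method is used, so treat the sketch below as a stand-in technique; the chunk IDs are made up and `k=60` is simply a conventional default.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of chunk IDs into one ranking.

    Each list contributes 1 / (k + rank) to a chunk's score, so chunks
    that rank well in both semantic and lexical search rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] = scores.get(chunk_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score, breaking ties alphabetically for stability.
    return sorted(scores, key=lambda cid: (-scores[cid], cid))

semantic_hits = ["chunk_a", "chunk_b", "chunk_c"]  # from a vector index
lexical_hits = ["chunk_c", "chunk_a", "chunk_d"]   # from exact-string search
fused = reciprocal_rank_fusion([semantic_hits, lexical_hits])
```

Chunks that appear in both lists (`chunk_a`, `chunk_c`) outrank chunks found by only one retriever.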

That is the difference between an AI workflow that generates pretty prose and one that actually protects a launch schedule.

Vectorizing the Datasheet: LLMs and Parametric Extraction

Static PDFs are terrible engineering databases. They were designed for human reading, not machine reasoning. Yet the most consequential facts in component selection still live inside them: ESR curves, timing limits, derating notes, moisture sensitivity levels, package dimensions, and qualification statements buried in annex tables. If those values stay trapped as visual ink on a page, your sourcing logic stays brittle.

The fix is to convert the datasheet into two linked representations: a semantic vector space for meaning, and a structured parameter store for hard engineering constraints. We do not choose one or the other. We need both.

The first layer is vectorization. After chunking a datasheet into coherent sections, we generate embeddings for each passage, table caption, note block, and package description. That makes phrases like "low-side gate driver with bootstrap supply" or "X7R capacitor with high ripple current tolerance" searchable even when the exact wording differs across vendors. The value here is conceptual retrieval. An engineer can search by function and behavior, not just by marketing taxonomy.

But vectors alone are not enough. A nearest-neighbor search may tell you two regulators are semantically similar while ignoring that one tops out at 28 V and the other is rated for 36 V. Catastrophic. So the second layer extracts parametric fields into normalized engineering records:

Voltage ranges

Current limits

Tolerance bands

Thermal resistance values

Package codes and dimensions

Qualification grades

Lifecycle indicators

Distributor and manufacturer cross-references

Each field is unit-normalized, source-linked, and confidence-scored. A value like 4.7 µF gets tagged with dielectric class, voltage rating, tolerance, and package size if present. A thermal metric such as θJA is tied back to the exact board conditions stated in the datasheet rather than stripped of context. That context preservation matters because numbers without test conditions are how bad substitutions sneak through.
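A minimal sketch of the unit-normalization step, assuming values arrive as short strings such as "4.7 µF". The `normalize` function, the prefix table, and the supported unit set are illustrative choices; a production parser would need to cover far more notations.

```python
import re

# SI prefixes this sketch understands; both Unicode "micro" code points are accepted.
SI_PREFIX = {"p": 1e-12, "n": 1e-9, "u": 1e-6, "\u00b5": 1e-6, "\u03bc": 1e-6,
             "m": 1e-3, "": 1.0, "k": 1e3, "M": 1e6}

# number, optional prefix, base unit (farad, volt, ampere, ohm, henry)
_VALUE = re.compile(
    r"^\s*([-+\u2212]?\d+(?:\.\d+)?)\s*([pnu\u00b5\u03bcmkM]?)([FVA\u03a9H])\s*$"
)

def normalize(text, source):
    """Convert a value string to base SI units, keeping its source link."""
    match = _VALUE.match(text)
    if match is None:
        raise ValueError(f"cannot parse {text!r}; route to engineer review")
    number, prefix, unit = match.groups()
    number = number.replace("\u2212", "-")  # repair a typographic minus sign
    return {"value": float(number) * SI_PREFIX[prefix],
            "unit": unit,
            "source": source}

cap = normalize("4.7 \u00b5F", "page 2, ordering table")
vmin = normalize("\u221240 V", "page 4, absolute maximum ratings")
```

Anything the pattern cannot parse raises an error instead of guessing, which matches the escalate-rather-than-assume policy.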

The workflow typically looks like this:

Layout-aware PDF parsing identifies headings, tables, footnotes, and images.

Table extraction models reconstruct rows and columns from messy vendor formatting.

Entity extraction tags symbols, units, packages, and qualification language.

Normalization logic converts aliases and units into comparable forms.

Embeddings are created for semantic search across sections and product families.

Validation rules check the extracted values against known physical and catalog limits.

Once that is done, the LLM can answer harder questions with real discipline. It can retrieve every buck regulator section mentioning pulse-skipping behavior, then filter to devices with the right package, input voltage, and automotive grade. It can compare two op-amps by both semantic descriptions and extracted offset-voltage drift. It can identify when a "similar" alternate differs in exposed pad geometry and therefore threatens rework at the PCB Layout and Design stage.
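The retrieve-then-filter pattern can be sketched as follows, assuming semantic search has already returned candidates with a similarity score. The part names, field names, and values are hypothetical.

```python
def filter_candidates(candidates, min_vin_max_v, package, grade):
    """Keep only semantically retrieved parts that pass the hard constraints."""
    passing = [
        c for c in candidates
        if c["vin_max_v"] >= min_vin_max_v
        and c["package"] == package
        and c["grade"] == grade
    ]
    # Rank the survivors by how closely they matched the semantic query.
    return sorted(passing, key=lambda c: c["similarity"], reverse=True)

# Hypothetical results of a search for buck regulators with pulse-skipping.
candidates = [
    {"part": "REG-A", "similarity": 0.93, "vin_max_v": 28.0,
     "package": "QFN-16", "grade": "automotive"},
    {"part": "REG-B", "similarity": 0.90, "vin_max_v": 36.0,
     "package": "QFN-16", "grade": "automotive"},
    {"part": "REG-C", "similarity": 0.88, "vin_max_v": 40.0,
     "package": "QFN-16", "grade": "industrial"},
]
shortlist = filter_candidates(candidates, 36.0, "QFN-16", "automotive")
```

`REG-A` is the closest semantic match but is rejected because it tops out at 28 V, which is the failure mode that vector similarity alone would miss.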

This is also where integration with Altium Designer and KiCad becomes practical rather than aspirational. A symbol field can point to a normalized component record. That record can store approved manufacturer part numbers, alternate packages, package-height warnings, and sourcing resilience flags. During Schematic Capture, the engineer is no longer browsing dead PDF pages and half-trusted distributor listings. They are querying a living engineering index.

For a startup team moving fast, this reduces the manual datasheet reading that eats whole afternoons. For a regulated product team, it creates traceability. For a PCB design consultant, it becomes a repeatable methodology: the same extraction logic can be applied across customer projects, reviewed against IPC-driven design rules, and continuously improved as new parts and suppliers enter the corpus.

The result is not just searchable documentation. It is a machine-readable engineering memory. One that remembers what a part means, what it claims, and what constraints it quietly imposes on production.

The Physics of Automated Replacement Logic

How does an AI know a part is viable? It decomposes the part into engineering constraints instead of treating the manufacturer part number as sacred. Instead of looking at "Part A," the model looks at what the board truly needs:

Vgs(th), Rds(on), gate charge, and safe operating area for MOSFETs.

Equivalent Series Resistance (ESR), ripple current, dielectric behavior, and voltage derating for capacitors.

Propagation delay, rise/fall time, IO thresholds, and logic family compatibility for digital devices.

Package land pattern, height, orientation limits, and assembly compatibility for SMT replacement viability.

Qualification class, moisture sensitivity level, and distributor pedigree for risk control.

When a primary component is flagged as high-risk or End-of-Life (EOL), the engine scans distributor APIs, manufacturer PCNs, lifecycle databases, parametric catalogs, and internal project history. It then ranks replacements by electrical equivalence, footprint fit, qualification relevance, and sourcing durability.

The result? A BOM optimized for Manufacturability before the first copper trace is even laid. This is the Right Way to build hardware in 2026.

Technical Deep Dive: The Mathematics of a BOM Resilience Score

This is where the hand-waving has to stop. If you want a sourcing model that actually survives contact with reality, you need a quantifiable framework. We call that framework the BOM Resilience Score.

At the line-item level, each component receives a normalized risk-adjusted score:

\[ BRS_i = w_s S_i + w_g G_i + w_l L_i + w_a A_i + w_q Q_i + w_c C_i \]

Where:

\(S_i\) = Supplier Diversity Score

\(G_i\) = Geographical Risk Score

\(L_i\) = Lifecycle Status Score

\(A_i\) = Availability and Lead-Time Score

\(Q_i\) = Qualification Fit Score

\(C_i\) = Counterfeit Exposure Score

\(w_n\) = weighting coefficients based on product class and industry
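As a worked illustration, the line-item score is a plain weighted sum. The sketch below assumes each term is normalized to the range 0 to 1 with higher meaning safer, and that the weights sum to 1; both are assumptions, and the numeric values are invented.

```python
def line_item_brs(scores, weights):
    """Weighted sum of the six per-component terms (S, G, L, A, Q, C)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same terms")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights are assumed to sum to 1")
    return sum(weights[term] * scores[term] for term in weights)

# Illustrative weights for a hypothetical product class.
weights = {"S": 0.20, "G": 0.15, "L": 0.20, "A": 0.20, "Q": 0.15, "C": 0.10}
# Illustrative scores for a single-source PMIC with a long lead time.
pmic = {"S": 0.5, "G": 0.8, "L": 0.9, "A": 0.4, "Q": 1.0, "C": 1.0}

brs_pmic = line_item_brs(pmic, weights)  # about 0.73
```

The sum works out to 0.10 + 0.12 + 0.18 + 0.08 + 0.15 + 0.10 = 0.73, with the weak supplier and availability terms pulling the score down.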

Then the total BOM-level resilience is not a simple average. That would be dangerously naive. A 200-line BOM can be destroyed by a single sole-source regulator. So the aggregate score must penalize critical single points of failure:

\[ BRS_{BOM} = \sum_{i=1}^{N} \alpha_i BRS_i - \lambda \sum_{i=1}^{N} \left(1-D_i\right)K_i \]

Where:

\(N\) = number of BOM line items

\(\alpha_i\) = design criticality factor for component \(i\)

\(D_i\) = effective dual-source or multi-source factor

\(K_i\) = consequence-of-shortage penalty

\(\lambda\) = global penalty constant for fragility

In plain English: a jellybean resistor with five approved vendors barely moves the needle. Your PMIC, MCU, Ethernet PHY, isolated gate driver, or unusual sensor? Those parts dominate the risk landscape.
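A small sketch of the aggregate formula shows how a single sole-source part dominates the result. All numbers are invented for illustration, and the dictionary keys (`alpha`, `brs`, `d`, `k`) are hypothetical names for the symbols in the formula.

```python
def bom_brs(items, lam):
    """Criticality-weighted sum of line scores minus a fragility penalty."""
    weighted = sum(it["alpha"] * it["brs"] for it in items)
    penalty = sum((1.0 - it["d"]) * it["k"] for it in items)
    return weighted - lam * penalty

resistor = {"alpha": 0.1, "brs": 0.95, "d": 1.0, "k": 0.1}  # five vendors
pmic_sole = {"alpha": 0.9, "brs": 0.60, "d": 0.0, "k": 1.0}  # sole source
pmic_dual = {"alpha": 0.9, "brs": 0.60, "d": 1.0, "k": 1.0}  # second source added

fragile = bom_brs([resistor, pmic_sole], lam=0.5)    # about 0.135
resilient = bom_brs([resistor, pmic_dual], lam=0.5)  # about 0.635
```

Qualifying a second source for the PMIC removes the entire penalty term, while the multi-sourced resistor contributes almost nothing either way.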

The Supplier Diversity Term

Supplier diversity is not just distributor count. It needs to reflect whether the part can be procured from authorized and independent channels, whether alternates are truly equivalent, and whether inventory is concentrated in a single broker ecosystem.

A practical form looks like:

\[ S_i = \min\left(1,\ \frac{\log(1+n_{auth}+0.35n_{dist})}{\log(1+n_{target})}\right) \]

Where:

\(n_{auth}\) = number of authorized manufacturers or officially approved sources

\(n_{dist}\) = number of qualified distributor channels

\(n_{target}\) = target diversity count for full score

The logarithm matters because the difference between one and two real sources is huge. The difference between nine and ten is not.
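The diminishing-returns behavior is easy to check numerically. The sketch below implements the formula as written; the target of 10 sources is an arbitrary value chosen to make the effect visible.

```python
import math

def supplier_diversity(n_auth, n_dist, n_target):
    """Log-scaled diversity score, capped at 1."""
    if n_target < 1:
        raise ValueError("n_target must be at least 1")
    raw = math.log(1 + n_auth + 0.35 * n_dist) / math.log(1 + n_target)
    return min(1.0, raw)

# Gain from adding a second source versus adding a tenth (n_target = 10).
gain_1_to_2 = supplier_diversity(2, 0, 10) - supplier_diversity(1, 0, 10)
gain_9_to_10 = supplier_diversity(10, 0, 10) - supplier_diversity(9, 0, 10)
```

With these inputs, `gain_1_to_2` is roughly 0.17 while `gain_9_to_10` is roughly 0.04, so the second source is worth about four times as much as the tenth.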

The Geographical Risk Term

Geographical risk reflects the harsh physical reality of trade lanes, regional concentration, sanctions exposure, natural disaster risk, and chokepoint logistics. If your entire supply path runs through one geography, your schedule is one event away from chaos.

One useful model is:

\[ G_i = 1 - \sum_{r=1}^{M} p_{ir} \cdot R_r \]

Where:

\(p_{ir}\) = fraction of sourcing exposure of component \(i\) in region \(r\)

\(R_r\) = normalized regional risk coefficient

\(M\) = number of exposed regions

If 90% of your supply sits inside one high-risk region, the score collapses fast. That is exactly what the model should do.
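A quick numeric sketch of the geographical term, assuming exposure fractions sum to 1 and regional risk coefficients lie between 0 and 1. The region names and coefficients are placeholders, not real risk data.

```python
def geographic_score(exposure, regional_risk):
    """1 minus the exposure-weighted regional risk for one component."""
    if abs(sum(exposure.values()) - 1.0) > 1e-9:
        raise ValueError("exposure fractions are assumed to sum to 1")
    return 1.0 - sum(p * regional_risk[region] for region, p in exposure.items())

# Placeholder risk coefficients for two hypothetical regions.
risk = {"region_x": 0.8, "region_y": 0.2}

concentrated = geographic_score({"region_x": 0.9, "region_y": 0.1}, risk)  # about 0.26
balanced = geographic_score({"region_x": 0.5, "region_y": 0.5}, risk)      # about 0.50
```

Shifting from a 90/10 split to a 50/50 split roughly doubles the score in this example.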

The Lifecycle Status Term

Lifecycle risk is one of the most underestimated killers in hardware. Teams see "active" and relax. They should not. Some parts are technically active but economically doomed, sitting on old process nodes or supported only because one legacy market still buys them.

A lifecycle score can be modeled as:

\[ L_i = \beta_1 E_i + \beta_2 (1 - P_i) + \beta_3 T_i \]

Where:

\(E_i\) = explicit lifecycle classification score, such as active, NRND, or EOL

\(P_i\) = probability of obsolescence inferred from PCN frequency and family attrition; it enters as \(1 - P_i\) so that, like the other terms, a higher \(L_i\) means lower risk

\(T_i\) = projected years of supported availability relative to product life

For long-life products, \(T_i\) becomes brutal. A consumer-grade IC with a three-year horizon may be fine for a smart gadget. It is a disaster for medical or industrial platforms expected to survive for ten years or more.
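The effect of product life on the lifecycle term can be sketched as below. Two assumptions are baked in: every term is oriented so that higher means safer, so the obsolescence probability enters as one minus its value, and the availability term is the ratio of supported years to product life, capped at 1. The beta weights are invented.

```python
def lifecycle_score(e, p_obsolete, years_supported, product_life_years,
                    betas=(0.4, 0.3, 0.3)):
    """Lifecycle term built from classification, obsolescence risk, and horizon."""
    b1, b2, b3 = betas
    # Assumed form of T_i: supported years relative to product life, capped at 1.
    t = min(1.0, years_supported / product_life_years)
    return b1 * e + b2 * (1.0 - p_obsolete) + b3 * t

# Same "active" IC with a three-year supported horizon in two products.
gadget = lifecycle_score(1.0, 0.2, years_supported=3, product_life_years=3)    # about 0.94
medical = lifecycle_score(1.0, 0.2, years_supported=3, product_life_years=10)  # about 0.73
```

The part itself has not changed; only the product life has, and that alone drops the score from about 0.94 to about 0.73.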

Availability, Qualification, and Counterfeit Terms

The other terms close the loop:

Availability \(A_i\) blends current stock, rolling lead time, MOQ stress, and price volatility.

Qualification fit \(Q_i\) checks whether the part satisfies AEC-Q100, medical documentation expectations, temperature grade, and test pedigree.

Counterfeit exposure \(C_i\) drops when supply depends on grey-market inventory, relabeled components, or unverifiable chain-of-custody data.

That last term matters far more than most teams admit. If your replacement strategy relies on mystery reels from a broker with fuzzy paperwork, the board may assemble. It may even boot. But reliability confidence has already been compromised.

Integration with Altium Designer and KiCad During Schematic Capture

This is where the workflow stops being theoretical and starts saving schedules. AI-driven BOM scrubbing is most valuable when it appears during schematic capture, not two days before procurement. The closer the alert sits to the engineer’s point of selection, the less painful the correction.

In Altium Designer, the integration model is straightforward. Each schematic symbol or managed component can carry manufacturer part numbers, approved alternates, distributor links, lifecycle flags, and internal qualification notes. A BOM intelligence layer polls those attributes in near real time and overlays sourcing health directly in the component properties or BOM panel.

That means while placing a regulator or Ethernet switch, the engineer can see alerts like:

Lead time spike detected

Lifecycle changed to NRND

Single-region inventory concentration

Non-authorized inventory dominating available stock

Suggested drop-in alternate available

The same principle works in KiCad, even though the ecosystem is more open and modular. Custom fields in symbols, library tables, and BOM export scripts can feed a sourcing engine that checks approved MPNs against live data. Instead of discovering a supply-chain landmine after layout, the engineer sees it while still editing the schematic.
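As a minimal sketch of the KiCad side, the script below scans a BOM exported as CSV and raises alerts from custom fields. The column names `MPN` and `Lifecycle` are hypothetical custom fields, the part numbers are placeholders, and the static `Lifecycle` column stands in for what would be a live sourcing lookup in a real flow.

```python
import csv
import io

RISKY_STATES = {"NRND", "EOL"}

def flag_rows(csv_text):
    """Return (reference, alert) pairs for rows that need attention."""
    alerts = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        ref = row["Reference"]
        if not (row.get("MPN") or "").strip():
            alerts.append((ref, "missing MPN"))
        lifecycle = (row.get("Lifecycle") or "").strip().upper()
        if lifecycle in RISKY_STATES:
            alerts.append((ref, f"lifecycle {lifecycle}"))
    return alerts

# Hypothetical BOM export with two custom symbol fields.
bom_csv = """Reference,Value,MPN,Lifecycle
U1,PMIC,EXAMPLE-PMIC-1,NRND
U2,MCU,,Active
R1,10k,EXAMPLE-RES-1,Active
"""
alerts = flag_rows(bom_csv)
```

Running a check like this on every schematic save, rather than at release, is what moves the alert to the point of selection.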

This connects naturally with automated schematic design and workflows like PCBSchemaGen. The real win is not automation for its own sake. It’s automation that prevents you from designing around a component that is already halfway out the door.

The Chemistry of Resilience: Identifying EOL Risks

Component obsolescence is a chemical and economic reality. Fabrication processes evolve, silicon production shifts to newer process nodes, passive formulations change, and older lines are retired to free capacity for more profitable parts. For companies in industrial automation or automotive engineering, where product lifecycles span years or even decades, this EOL risk is a ticking time bomb.

Our Manufacturing Support services use generative tools to conduct deep-dive audits of your BOM. We do not just say a part is going away. We map the replacement path:

Tier 1: Direct drop-in replacement with matching footprint, fit, and critical specifications.

Tier 2: Functional equivalent requiring minor library, layout, or sourcing updates.

Tier 3: Architectural pivot requiring schematic edits and a more strategic redesign.
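The three tiers can be expressed as a simple decision rule. This is a deliberately reduced sketch: the three boolean inputs are a hypothetical summary of what would in practice be many separate engineering checks.

```python
def replacement_tier(same_footprint, critical_specs_match, same_function):
    """Map coarse comparison results onto the three replacement tiers."""
    if same_footprint and critical_specs_match:
        return 1  # direct drop-in replacement
    if same_function:
        return 2  # functional equivalent, minor library or layout updates
    return 3      # architectural pivot, schematic edits required

drop_in = replacement_tier(True, True, True)
new_package = replacement_tier(False, True, True)
redesign = replacement_tier(False, False, False)
```

A part with the same function but a different package lands in Tier 2, which is the case most likely to be mislabeled as a drop-in by keyword search.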

By identifying these ramifications early in the PCB Layout and Design phase, we reduce the rework that kills budgets and spirits alike.

Industry Deep Dive: Medical Component Longevity

Medical hardware teams live in a different risk universe. Even for devices that are not implantable, validation cycles are expensive, documentation is rigid, and replacement of a qualified part can trigger painful reverification. This makes component longevity a first-class BOM parameter, not a nice-to-have.

The wrong move is selecting a flashy new IC with sparse long-term availability history because it looks attractive on a parametric search. The right move is choosing components with documented lifecycle stability, strong manufacturer pedigree, repeatable lot traceability, and a proven field track record.

For medical BOM optimization, the model weights lifecycle and documentation harder:

Longer availability projections

Lower PCN churn

Clear traceability back to authorized channels

Stable packaging and revision history

Conservative second-source planning before release

This is also where cross-functional alignment matters. Your electrical design may be elegant, but if a critical ADC or PMIC disappears during a production run, validation schedules stretch, procurement scrambles, and regulatory paperwork starts breeding in dark corners. It is avoidable. If you want a more robust partner at the layout and manufacturability level, this is exactly where an experienced PCB design consultant earns their keep.

Industry Deep Dive: Aerospace Counterfeit Mitigation

Aerospace and defense projects cannot treat BOM resilience as a mere lead-time problem. Counterfeit infiltration is the nightmare scenario. The part may look correct, mark correctly under a cursory inspection, and still be electrically suspect, reclaimed, remarked, or internally nonconforming.

This is why counterfeit mitigation belongs inside the sourcing model itself. If availability suddenly appears from non-authorized channels while authorized inventory is dry, that is not good news. That is a risk flare.

The AI-driven workflow flags:

Abrupt pricing discontinuities

Inventory appearing only through brokers

Manufacturer marking inconsistencies

Package image mismatches

Lot-code anomalies

Datasheet and received-part discrepancies

These checks complement inspection workflows such as Deep Learning for Precision Solder Inspection, because robust hardware quality does not start at solder fillets. It starts with confidence that the component itself is authentic.
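Two of the flags above, broker-only inventory and abrupt pricing discontinuities, lend themselves to a short sketch. The seller names, prices, and the 50% deviation threshold are assumptions made for illustration.

```python
from statistics import median

def counterfeit_flags(offers, price_history, threshold=0.5):
    """Flag broker-only stock and prices far from the historical median."""
    flags = []
    authorized_stock = sum(o["stock"] for o in offers if o["authorized"])
    broker_stock = sum(o["stock"] for o in offers if not o["authorized"])
    if broker_stock > 0 and authorized_stock == 0:
        flags.append("broker-only inventory")
    baseline = median(price_history)
    for offer in offers:
        if abs(offer["price"] - baseline) / baseline > threshold:
            flags.append(f"price discontinuity at {offer['seller']}")
    return flags

offers = [
    {"seller": "dist_a", "authorized": True, "stock": 0, "price": 2.05},
    {"seller": "broker_x", "authorized": False, "stock": 5000, "price": 0.80},
]
flags = counterfeit_flags(offers, price_history=[2.0, 2.1, 1.9])
```

In this example the sudden broker stock at 60% below the usual price trips both flags, which is exactly the "risk flare" pattern.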

Industry Deep Dive: Automotive and AEC-Q100 Sourcing

Automotive programs bring their own flavor of pain. Here, the keyword is not just availability. It is qualification continuity. A drop-in part that lacks the correct AEC-Q100 grade, temperature range, PPAP support, or sourcing consistency is not a valid substitute, no matter how tempting the stock level looks.

That means the scoring engine has to distinguish between "electrically similar" and "program-eligible." They are not the same thing.

For automotive BOMs, the AI should verify:

AEC-Q100 status for ICs, or the relevant qualification for other part types (such as AEC-Q200 for passives)

Operating temperature grade

Vendor quality history

Stable packaging and reel format for assembly lines

Alternate parts with matching automotive documentation

Consistency across approved manufacturing sites

This is where engineers often get trapped by a common reflex: trying to rescue a fragile BOM with a spreadsheet and a lot of optimism. The spreadsheet grows. The confidence shrinks. The requalification burden multiplies. The better path is to encode those qualification rules directly into the replacement logic from the start.
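Encoding the distinction between "electrically similar" and "program-eligible" might look like the sketch below. It relies on the AEC-Q100 convention that a lower grade number denotes a wider operating temperature range; the field names and the sample parts are hypothetical.

```python
def program_eligible(candidate, required_grade):
    """True only if the alternate meets qualification, not just electrical fit."""
    grade = candidate.get("aec_q100_grade")  # None means not AEC-Q100 qualified
    if grade is None:
        return False
    # Lower AEC-Q100 grade numbers cover a wider temperature range.
    if grade > required_grade:
        return False
    return bool(candidate.get("ppap_available"))

in_stock_industrial = {"part": "ALT-1", "aec_q100_grade": None, "ppap_available": False}
qualified_alt = {"part": "ALT-2", "aec_q100_grade": 1, "ppap_available": True}
narrow_grade = {"part": "ALT-3", "aec_q100_grade": 3, "ppap_available": True}

eligible = [c["part"] for c in (in_stock_industrial, qualified_alt, narrow_grade)
            if program_eligible(c, required_grade=1)]
```

Only `ALT-2` survives, regardless of how attractive the stock levels of the other two look.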

Bridging the Gap to Production

The true cost of a component error is not the unit price delta. It is the cost of engineering time, lost schedule, respins, procurement firefighting, excess inventory, and missed market windows.

At Circuit Board Design, our process is built to eliminate those friction points. We integrate AI-driven BOM scrubbing directly into our Verification and Testing and Validation workflows so every design is evaluated not just for electrical correctness, but for real-world producibility.

| The Wrong Way (Manual) | The Right Way (Generative) |
|---|---|
| Checking stock at the end of the design cycle. | Continuous supply chain monitoring during Schematic Capture. |
| Relying on favored parts regardless of lead times. | Data-driven selection based on a BOM Resilience Score. |
| Panic-buying components from grey-market brokers. | Identifying verified drop-in replacements from authorized distributors. |
| Manual cross-referencing of datasheets for hours. | Instant technical comparison via AI-assisted parametric and datasheet analysis. |
| Treating compliance as a late-stage procurement issue. | Embedding industry-specific sourcing rules from the first symbol placement. |

From Nightmare to Seamless Execution

Hardware development does not have to be a gauntlet of panic emails and last-minute redesigns. Supply-chain uncertainty has been treated like a rite of passage for too long. It does not need to stay that way.

With Generative BOM Optimization, your BOM becomes less brittle, more explainable, and far more actionable. A shortage in one region can trigger a ranked list of approved alternates. A lifecycle downgrade can generate an engineering review before layout is frozen. A suspicious supply signal can be flagged before purchasing gets cornered into a bad decision.

That is what confidence looks like. Not blind optimism. Engineered resilience.

Whether you are a hardware startup building an MVP, an OEM managing recurring builds, or a regulated product team navigating long validation cycles, the goal is the same: performance, reliability, and manufacturability. We bridge the gap between your conceptual design and the harsh physical reality of sourcing, assembly, and production.

FAQ

How is a BOM Resilience Score different from a normal sourcing check?

A normal sourcing check is a snapshot. A BOM Resilience Score is a model.

A basic check tells you whether a part is available today. A resilience score evaluates whether the part is likely to remain a safe choice across lead-time shifts, lifecycle changes, regional concentration, counterfeit exposure, and qualification constraints. It is the difference between seeing inventory and understanding risk.

Can AI really identify safe drop-in replacements?

Yes, but only when the model is constrained by engineering rules.

A useful system compares package compatibility, electrical limits, qualification status, thermal behavior, and supply-chain pedigree. It should not blindly substitute by keyword similarity. The right workflow keeps an engineer in review while letting AI do the heavy scanning and ranking.

Does this work with Altium Designer and KiCad?

Yes. Both can support real-time or near-real-time BOM intelligence.

In Altium Designer, managed component data and BOM panels make it easier to surface sourcing alerts directly inside the design flow. In KiCad, custom symbol fields, scripted BOM exports, and library metadata can feed the same kind of analysis. The key is tying schematic component choices to live sourcing data early.

Why is counterfeit mitigation part of BOM optimization?

Because a part that is available from the wrong channel is not really available.

If your sourcing path depends on unverifiable brokers, mismatched markings, or weak chain-of-custody data, your risk is no longer just schedule-related. It becomes a quality and reliability problem. For aerospace, defense, industrial, and medical hardware, that is unacceptable.

What industries benefit the most from resilient BOM engineering?

Any hardware team benefits, but regulated sectors feel the pain fastest.

Medical devices need longevity and documentation stability. Aerospace and defense need authenticity and traceability. Automotive programs need qualification continuity such as AEC-Q100 support. Even consumer and IoT products benefit because supply volatility can wreck launch timing and margins.

Secure Your Design's Future

Don't let your next project become a cautionary tale of what could have been. The tools to build a resilient, AI-optimized hardware product are here. At Circuit Board Design, we combine deep expertise in IPC-2221 design practice, real fabrication rule validation, and modern AI-assisted workflows to help you reach manufacturing with fewer nasty surprises.

Your vision deserves a foundation that will not crumble at the first sign of a supply-chain ripple. Let's build something that lasts.

Ready to optimize your next project? Contact our engineering team today to learn how our Manufacturing Support and PCB Design Services can bulletproof your supply chain. Explore our Process or read more about solving DFM issues before they start.